Let us see how Binary Cross Entropy (BCE) loss is implemented using Python and TensorFlow:

- Importing TensorFlow:

import tensorflow as tf

This line imports TensorFlow, a popular open-source machine-learning framework used for building and training neural networks.

- Simulated Data:

true_labels = [1, 0, 1, 1, 0]

predicted_probabilities = [0.8, 0.2, 0.6, 0.9, 0.3]

Here, we have two lists of simulated data: true_labels and predicted_probabilities. true_labels holds the actual labels for the binary classification task (1 for the positive class, 0 for the negative class), and predicted_probabilities holds the predicted probability of the positive class for each corresponding example.

- Calculating Binary Cross Entropy:

loss = tf.keras.losses.BinaryCrossentropy()

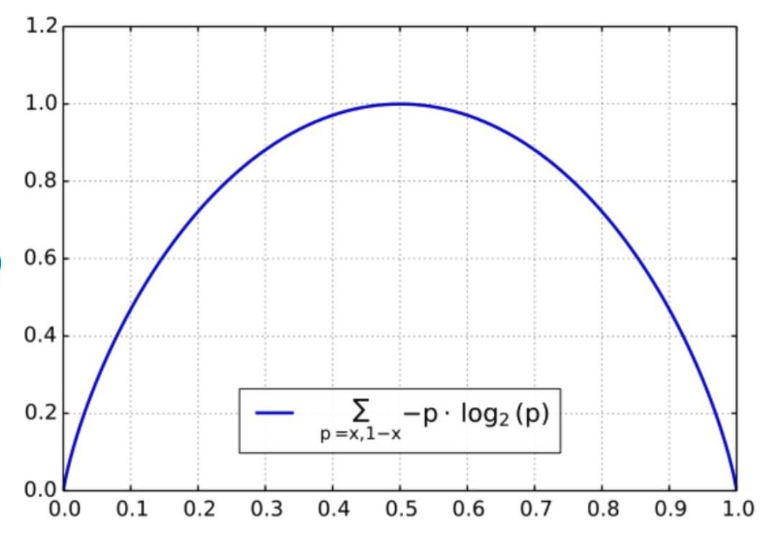

bce_loss = loss(true_labels, predicted_probabilities)

We create an instance of the BinaryCrossentropy loss using tf.keras.losses.BinaryCrossentropy(). This loss is commonly used for binary classification tasks; it measures how far the predicted probabilities are from the true labels. We then compute the BCE loss by calling the loss instance with true_labels and predicted_probabilities as arguments. With the default settings, the result is the mean loss over the five examples, and it is stored in the variable bce_loss.

- Printing the Loss:

print("Binary Cross Entropy Loss:", bce_loss.numpy())

Finally, we print the calculated BCE loss. The .numpy() method extracts the numerical value from the TensorFlow tensor.

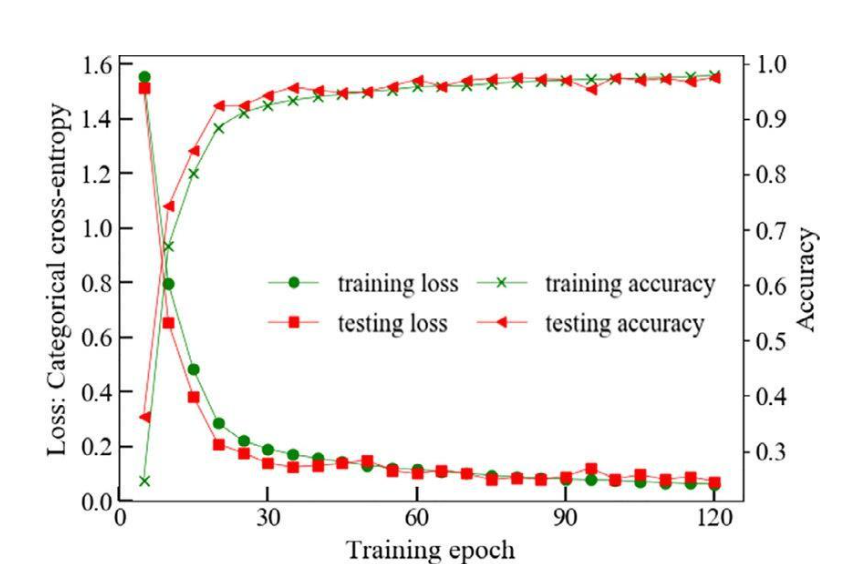
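To see where this number comes from, the same calculation can be sketched by hand with only the Python standard library. This is an illustrative re-implementation, not TensorFlow's actual code: the helper name `binary_cross_entropy` and the clipping constant `eps` are assumptions made here, with `eps` added only to avoid taking log(0).

```python
import math

# Same simulated data as in the TensorFlow example above.
true_labels = [1, 0, 1, 1, 0]
predicted_probabilities = [0.8, 0.2, 0.6, 0.9, 0.3]


def binary_cross_entropy(y_true, y_pred, eps=1e-7):
    """Mean binary cross-entropy (hypothetical helper; eps is an assumed clip value)."""
    total = 0.0
    for y, p in zip(y_true, y_pred):
        # Keep p strictly inside (0, 1) so that log() is always defined.
        p = min(max(p, eps), 1.0 - eps)
        # Per-example loss: -[y * log(p) + (1 - y) * log(1 - p)]
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)


manual_bce = binary_cross_entropy(true_labels, predicted_probabilities)
print("Manual BCE:", round(manual_bce, 4))  # approximately 0.2838
```

Each example contributes the negative log of the probability assigned to its true class, so confident correct predictions (such as 0.9 for a positive label) add little to the average, while less confident ones (such as 0.6) add more.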

Code for Categorical Cross Entropy

Let us see how Categorical Cross Entropy (CCE) loss is implemented using Python and TensorFlow:

- Import TensorFlow:

Before you can use TensorFlow, you need to import it. TensorFlow is a popular machine-learning library that provides tools for building and training various types of neural network models.

import tensorflow as tf

- Create Ground Truth Labels:

The ground truth labels are the true class labels for your training examples. In this example, we’re using a small toy dataset with three examples and three classes. The labels are represented in a one-hot encoded format, where each row corresponds to an example and each column represents a class. The value 1 in a row indicates the true class for that example.

ground_truth = tf.constant([[0, 1, 0], [1, 0, 0], [0, 0, 1]], dtype=tf.float32)

- Create Predicted Probabilities:

The predicted probabilities are the output of the model after applying a softmax activation function to the raw logits. These probabilities represent the model’s confidence in assigning each example to each class.
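As a rough illustration of the softmax step mentioned above, the following standard-library sketch turns a vector of raw logits into probabilities. The `softmax` helper and the `example_logits` values are hypothetical and are not taken from any model in this article.

```python
import math


def softmax(logits):
    """Convert raw logits into probabilities that sum to 1 (illustrative helper)."""
    # Subtracting the maximum logit keeps exp() from overflowing
    # and does not change the result.
    largest = max(logits)
    exps = [math.exp(z - largest) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


example_logits = [1.0, 2.0, 0.5]  # hypothetical raw model outputs for three classes
probs = softmax(example_logits)
print("Probabilities:", probs)
print("Sum:", sum(probs))
```

The largest logit receives the largest probability, and the outputs always sum to 1. The example in this article skips this step and hard-codes the probabilities directly: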

predicted_probs = tf.constant([[0.2, 0.6, 0.2], [0.8, 0.1, 0.1], [0.3, 0.2, 0.5]], dtype=tf.float32)

- Calculate Categorical Cross-Entropy Loss:

The categorical cross-entropy loss measures the difference between the predicted probabilities and the ground truth labels. TensorFlow provides a convenient way to calculate it through the tf.keras.losses.CategoricalCrossentropy class, which expects the labels in one-hot encoded form.

categorical_cross_entropy = tf.keras.losses.CategoricalCrossentropy()

loss = categorical_cross_entropy(ground_truth, predicted_probs)

- Print the Loss:

After calculating the loss, you can print its value. The .numpy() method is used to extract the numerical value of the loss from the TensorFlow tensor.

print("Categorical Cross-Entropy Loss:", loss.numpy())
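As a sanity check, the same loss can be worked out by hand with only the Python standard library. This is a minimal sketch rather than TensorFlow's implementation: the helper name `categorical_cross_entropy_manual` and the clipping constant `eps` are assumptions introduced here, and the data simply repeats the values from the example above as plain lists.

```python
import math

# Same toy data as above, written as plain Python lists.
ground_truth = [[0, 1, 0], [1, 0, 0], [0, 0, 1]]
predicted_probs = [[0.2, 0.6, 0.2], [0.8, 0.1, 0.1], [0.3, 0.2, 0.5]]


def categorical_cross_entropy_manual(y_true, y_pred, eps=1e-7):
    """Mean categorical cross-entropy (hypothetical helper; eps is an assumed clip value)."""
    total = 0.0
    for true_row, pred_row in zip(y_true, y_pred):
        # With one-hot labels, only the true class contributes: -log(p_true).
        total += -sum(t * math.log(max(p, eps)) for t, p in zip(true_row, pred_row))
    return total / len(y_true)


manual_cce = categorical_cross_entropy_manual(ground_truth, predicted_probs)
print("Manual CCE:", round(manual_cce, 4))  # approximately 0.4757
```

The three examples contribute -log(0.6), -log(0.8), and -log(0.5) respectively, and their mean is about 0.4757, which is the value the TensorFlow code should print up to floating-point precision.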